AI-Powered Warranty Fraud Detection: What Your Platform Should Catch

Key Takeaways

- Fraudulent and abusive warranty claims cost manufacturers 3–10% of total warranty program budgets — on a $10M program, that is $300K–$1M walking out the door annually

- Rule-based systems catch only 15–25% of fraud; AI-powered detection reaches 60–75% by analyzing patterns across customers, geographies, and time — not just claim-by-claim validation

- Gray market fraud, expiry-window clustering, and multi-claim customer patterns are invisible to traditional serial number lookup and duplicate checks

- The correct fraud pipeline has three lanes: auto-approve (no anomalies), manual review (anomaly signals), and auto-deny (hard validation failures only) — binary approve/deny creates both false positives and missed fraud

If your warranty program has never flagged a suspicious claim, that's not a sign your customers are unusually honest. It's a sign your detection is unusually weak.

Warranty fraud is one of those losses that hides in plain sight. It doesn't show up as a line item on a P&L. It gets absorbed into "warranty costs" and treated as a cost of doing business — until someone runs the numbers and realizes how much of that spend was preventable. Industry research consistently puts fraudulent and abusive warranty claims at 3–10% of total warranty program budgets (according to the Warranty Week 2024 Warranty Fraud Industry Report and corroborated by analysis from the Warranty Chain Management Conference). For a manufacturer running $10 million in annual warranty costs, that's $300,000 to $1 million walking out the door every year.

The harder truth: most of it isn't organized crime. It's opportunistic. A customer whose product is three months past warranty who submits a claim anyway. A secondhand buyer who was never the registered owner. A gray market unit that was purchased outside your authorized distribution network and is now being claimed against your warranty program as if it were a legitimate domestic sale. These aren't sophisticated fraudsters; they're people testing what your system will catch. And if the answer is "not much," they'll keep testing.

Fraud Detection Capabilities by Approach

| Detection Signal | Rule-Based System | AI-Powered Detection | Coverage |
|---|---|---|---|
| Serial number validity | Yes | Yes | Basic |
| Duplicate claims (same serial) | Yes | Yes | Basic |
| Out-of-warranty claims | Yes | Yes | Basic |
| Customer claim frequency patterns | No | Yes | Intermediate |
| Geographic anomalies | No | Yes | Intermediate |
| Expiry-window clustering | No | Yes | Intermediate |
| Serial lifecycle validation | No | Yes | Advanced |
| Gray market detection | No | Yes | Advanced |
| Counterfeit serial format detection | No | Yes | Advanced |
| Typical fraud catch rate | 15–25% | 60–75% | AI +35–50 pts |

Competitive Landscape

Registria and NeuroWarranty dominate the warranty administration space but offer limited fraud detection beyond basic rule-based validation. Dyrect and Claimlane add claim orchestration but lack AI-powered pattern analysis. These point solutions rely on serial lookup and duplicate checking — the table stakes that catch 15–25% of fraudulent and abusive claims. BrandedMark's fraud detection integrates AI pattern recognition directly into the claims pipeline, running customer-level pattern analysis, geographic anomaly detection, and timing analysis on every submission. By connecting fraud detection to the product graph (linked customer identity, serialized products, purchase channel data, and claim history), BrandedMark achieves 60–75% fraud catch rates without requiring separate specialist tooling.

What Traditional Detection Catches (And Misses)

Most warranty platforms perform the same three checks: serial number lookup confirms the product exists in the system, purchase date validation confirms the claim falls within the warranty window, and duplicate claim checking flags if the same serial has been submitted before. These are necessary baseline controls, but they catch only 15–25% of fraudulent and abusive warranty claims (Warranty Week, 2024 Warranty Fraud Industry Report). The fundamental limitation of rule-based detection is that it operates claim-by-claim, evaluating each submission in isolation. A single claim from a customer who has filed five claims in three months looks identical to a first-time legitimate claim when evaluated individually. A gray market unit with a valid serial number from an authentic production run passes every rule-based check because the fraud is in the distribution mismatch, not the serial itself. A wave of claims submitted in the 29-day window before warranty expiry produces no individual red flags — each claim looks legitimate on its own, and the anomaly only becomes visible when the submission pattern is analyzed across the cohort. Rule-based systems cannot see patterns. They have no mechanism for comparing a new claim against the behavior of the customer who filed it, the geography where the product was distributed, or the statistical shape of submissions across a product line over time. That is the detection gap AI-powered systems are built to close. See also: warranty registration benefits, how to measure product identity ROI, and connected product analytics.

What Falls Through the Gaps

The serial number exists — but the product is a gray market import. Your lookup confirms the GTIN and serial are valid. What it doesn't check is whether that unit was ever sold into your North American distribution network, or whether it was manufactured for the EU market and imported outside your authorized channels. Gray market units often carry valid serial numbers from legitimate production runs. The fraud isn't in the serial — it's in the distribution mismatch.

The claim isn't a duplicate — but the customer has filed five of them. Rule-based systems check whether this specific serial has been claimed before. They rarely check how many claims a given customer account, email address, or household address has submitted in the past 90 days. A customer with five claims on five different serials across three months is a pattern worth investigating. No individual claim triggers a flag.

The date is within warranty — but only just. Submission spikes in the final 30 days before warranty expiry are well-documented in claims data. A customer who has owned a product for 11 months and 15 days and suddenly experiences a defect is not inherently suspicious. But a cohort of 400 customers all submitting claims in the 29-day window before expiry, across the same product line, across multiple regions, is a signal. No single claim looks wrong. The aggregate pattern is telling a story.

The claim looks legitimate — but the region doesn't. If 80% of your warranty claims for a product line that was only distributed in Western Europe are being filed from postal codes in Eastern Europe or shipped to freight forwarders in Miami, that geographic mismatch is meaningful. Rule-based systems don't map claim origin against distribution geography.

What AI-Powered Detection Adds

AI-powered warranty fraud detection changes the fundamental unit of analysis from individual claims to behavioral patterns across customers, geographies, and time. Instead of evaluating each submission against a fixed ruleset in isolation, machine learning models build a continuous picture of what legitimate claim behavior looks like across a warranty program and flag statistical deviations from that established baseline. The practical detection rate improvement is substantial: AI-assisted systems reach 60–75% fraud catch rates, compared to 15–25% for rule-based approaches, an improvement of 35–50 percentage points on the same claims volume. This is achieved through four overlapping detection layers. Customer-level pattern recognition identifies accounts filing claims at anomalous frequency or with suspicious serial clustering. Geographic anomaly detection maps claim origins against known distribution footprints, surfacing gray market activity that passes every serial-level check. Timing analysis detects statistically abnormal submission clusters — expiry-window spikes that look normal claim-by-claim but reveal a pattern at scale. Serial lifecycle validation checks whether a unit's full history is consistent with the claim being made, catching counterfeit serials and units reported as returned or destroyed. Together these layers catch the fraud categories that rule-based systems are structurally blind to.

Pattern Recognition Across Customer History

An AI layer on your claims pipeline tracks behavior at the customer identity level, not just the serial level. A customer filing their second claim in 60 days on a different product isn't necessarily fraudulent. A customer filing their fifth claim in three months, each on a different serial number, each submitted within the first 10 days of the warranty window — that cluster is worth human review. The model learns what normal claim frequency looks like for your customer population and surfaces outliers automatically.
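As a rough illustration of customer-level outlier detection, the sketch below counts claims per customer in a trailing window and flags anyone far above the population's typical count. It uses a plain mean-plus-k-standard-deviations threshold as a stand-in for a trained model; the function name, the tuple layout, and the 90-day window and `k=2.0` defaults are all assumptions made for the example.

```python
from collections import Counter
from datetime import date, timedelta
from statistics import mean, pstdev


def flag_frequency_outliers(claims, as_of, window_days=90, k=2.0):
    """Return {customer_id: claim_count} for customers far above the typical count.

    claims: iterable of (customer_id, serial, submitted_on) tuples.
    k: standard deviations above the mean that count as an outlier (assumed value).
    Only customers with at least one claim in the window form the population.
    """
    cutoff = as_of - timedelta(days=window_days)
    counts = Counter(
        customer_id
        for customer_id, _serial, submitted_on in claims
        if cutoff <= submitted_on <= as_of
    )
    values = list(counts.values())
    if len(values) < 2:
        return {}  # not enough customers to define "normal"
    threshold = mean(values) + k * pstdev(values)
    return {cid: n for cid, n in counts.items() if n > threshold}


today = date(2024, 6, 30)
# One customer with five claims on five serials, six customers with one or two.
claims = [("cust-a", f"SN{i}", today - timedelta(days=10 * i)) for i in range(5)]
claims += [(f"cust-{c}", f"SN9{c}", today - timedelta(days=20)) for c in "bcdef"]
claims += [
    ("cust-g", "SN80", today - timedelta(days=5)),
    ("cust-g", "SN81", today - timedelta(days=40)),
]
print(flag_frequency_outliers(claims, as_of=today))  # {'cust-a': 5}
```

A flagged customer here is a candidate for human review, not a verdict; the second claim from `cust-g` stays below the threshold, matching the point that a second claim alone is not suspicious.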

Geographic Anomaly Detection

Claims data has a natural geography that reflects your distribution footprint. Products sold through your US retail partners generate claims with US ZIP codes, US purchase receipts, and US return shipping addresses. When claims start arriving for products with US serial numbers from addresses in regions outside your distribution network, or when a disproportionate share of claims for a specific SKU clusters around known freight forwarder addresses, the model flags the geographic mismatch for investigation.

This is particularly valuable for identifying gray market activity. The product may be genuine. The serial may be valid. But the claim is being filed against a warranty program the product was never sold into.
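One simple way to picture the comparison is to measure what share of a SKU's claims originate outside the countries it was sold into. The sketch below does only that; the function names and the 5% tolerance are hypothetical, and a production system would likely work at finer granularity (postal codes, known forwarder addresses) than country codes.

```python
def out_of_footprint_share(claim_countries, distribution_countries):
    """Fraction of claims filed from outside the countries a SKU was sold into."""
    if not claim_countries:
        return 0.0
    outside = sum(1 for c in claim_countries if c not in distribution_countries)
    return outside / len(claim_countries)


def geographic_flag(claim_countries, distribution_countries, max_share=0.05):
    """True when the out-of-footprint share exceeds an assumed tolerance."""
    return out_of_footprint_share(claim_countries, distribution_countries) > max_share


us_footprint = {"US"}
claims_for_sku = ["US"] * 17 + ["BR", "BR", "VE"]  # illustrative data
share = out_of_footprint_share(claims_for_sku, us_footprint)
print(f"{share:.0%} of claims originate outside the footprint")
print("flag for review:", geographic_flag(claims_for_sku, us_footprint))
```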

Timing Analysis and Expiry-Window Clustering

Legitimate warranty claims are roughly evenly distributed across the warranty period, with some expected weighting toward early claims for out-of-box failures and a modest uptick toward the end of the warranty term. What looks statistically abnormal is a sharp spike concentrated in a narrow window just before expiry — particularly when that spike is concentrated in a specific geography, a specific retail channel, or a specific product variant.

AI models trained on your historical claims data can distinguish between the expected end-of-warranty uptick and a statistically anomalous submission cluster. The signal isn't any individual claim. It's the shape of the distribution.
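To make "the shape of the distribution" concrete, the sketch below compares the share of a cohort's claims that fall in the final days of warranty against a historical baseline share, using a one-proportion z-score under a normal approximation. This is a deliberately simple statistical stand-in, not a trained model; the function name, the 30-day window, and the 10% baseline in the example are assumptions.

```python
from math import sqrt


def expiry_window_zscore(days_left_at_submission, baseline_share, window_days=30):
    """Z-score of the observed end-of-warranty share against a historical baseline.

    days_left_at_submission: days of warranty remaining when each claim was filed.
    baseline_share: share of claims historically filed in the final window
                    (assumed to come from your own claims history).
    """
    n = len(days_left_at_submission)
    if n == 0 or not 0 < baseline_share < 1:
        raise ValueError("need at least one claim and a baseline strictly between 0 and 1")
    in_window = sum(1 for d in days_left_at_submission if 0 <= d <= window_days)
    observed = in_window / n
    standard_error = sqrt(baseline_share * (1 - baseline_share) / n)
    return (observed - baseline_share) / standard_error


# Illustrative cohort: 400 claims, half of them filed in the last 30 days.
spiky_cohort = [15] * 200 + [200] * 200
# Comparison cohort that matches the assumed 10% baseline.
typical_cohort = [15] * 40 + [200] * 360

print(round(expiry_window_zscore(spiky_cohort, baseline_share=0.10), 1))
print(round(expiry_window_zscore(typical_cohort, baseline_share=0.10), 1))
```

A large positive score says the cohort is far more end-loaded than history predicts; no individual claim is scored at all, which is the point of the cohort-level view.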

Serial Format and Lifecycle Validation

Beyond confirming that a serial number exists, AI-assisted validation can check whether a serial's lifecycle makes sense. Has this unit been reported stolen or decommissioned? Does the claimed purchase date match what your supply chain data shows for when units with that serial range were shipped to retail? Is the serial number format consistent with your current production encoding, or does it match a format used by a known counterfeit operation?

This layer catches fraud that passes basic lookup: counterfeit units with plausible-looking but invalid serials, units claimed as sold new that your records show as returned and destroyed, and serials reported in multiple simultaneous claims under different customer identities.
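The lifecycle questions above can be expressed as a handful of checks against a unit's record. The following is a minimal sketch under stated assumptions: the serial format regex, the status vocabulary, and the `SerialRecord` fields are all hypothetical and would need to match your own production encoding and supply chain data.

```python
import re
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional

# Hypothetical production format: two uppercase letters followed by eight digits.
SERIAL_PATTERN = re.compile(r"[A-Z]{2}\d{8}")


@dataclass
class SerialRecord:
    serial: str
    status: str                        # assumed values: "sold", "returned", "destroyed", "stolen"
    shipped_to_retail: Optional[date]  # when the unit's serial range reached retail


def lifecycle_flags(serial: str, claimed_purchase: date,
                    records: Dict[str, SerialRecord]) -> List[str]:
    """Return the lifecycle inconsistencies found for one claim (empty list if none)."""
    flags = []
    if not SERIAL_PATTERN.fullmatch(serial):
        flags.append("format_mismatch")
    record = records.get(serial)
    if record is None:
        flags.append("unknown_serial")
        return flags
    if record.status in {"returned", "destroyed", "stolen"}:
        flags.append(f"status_{record.status}")
    if record.shipped_to_retail and claimed_purchase < record.shipped_to_retail:
        flags.append("purchased_before_shipment")
    return flags


records = {"AB12345678": SerialRecord("AB12345678", "destroyed", date(2023, 3, 1))}
print(lifecycle_flags("AB12345678", date(2023, 2, 1), records))
print(lifecycle_flags("ab-1", date(2023, 2, 1), records))
```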

The Data You Need to Make This Work

AI fraud detection performs only as well as the data it runs on. Deploying a machine learning model against thin or inconsistent data produces noise, not detection signal. Four data elements form the minimum viable foundation for effective AI-powered warranty fraud detection.

- Serialized product identity: every unit needs a unique serial, not a product model code or a batch identifier. SGTIN-level serialization (GS1 GTIN plus a unique serial per unit) gives the system the granularity to track individual products from manufacture through sale to claim. Without it, you can check if a model is under warranty; you cannot check if this specific unit has already been claimed.

- Customer identity linked to product at registration: fraud detection at the customer pattern level requires knowing which customer owns which serial. This means capturing name, contact details, and proof of purchase at warranty registration and linking them to the serial at that moment.

- Purchase channel data: knowing which retailer, which region, and which distribution channel a unit was sold through is the foundation for geographic anomaly detection. A unit sold through a UK retail partner being claimed from a US address is a meaningful signal, but only if the channel data exists to make the comparison.

- Full claim history per customer and per serial: every submission, whether approved, denied, or flagged, must be stored against both the customer record and the product. This historical record is what the model trains on and queries when evaluating each new claim.
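The four data elements can be pictured as three linked record types plus a lookup over claim history. This is only a sketch of one possible shape: the class and field names are invented for illustration, and the GTIN shown is a placeholder value.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Sgtin:
    gtin: str    # GS1 product identifier, shared by every unit of a model
    serial: str  # unique per physical unit


@dataclass(frozen=True)
class Registration:
    sgtin: Sgtin
    customer_id: str      # customer identity linked to the unit at registration
    purchase_date: date
    retailer: str         # purchase channel data
    sales_region: str


@dataclass(frozen=True)
class ClaimRecord:
    sgtin: Sgtin
    customer_id: str
    submitted_on: date
    outcome: str          # "approved", "denied", or "flagged"


def history_for(claims, *, customer_id=None, sgtin=None):
    """Return the claims matching a customer, a unit, or both."""
    return [
        c for c in claims
        if (customer_id is None or c.customer_id == customer_id)
        and (sgtin is None or c.sgtin == sgtin)
    ]


unit = Sgtin(gtin="00012345678905", serial="AB12345678")  # placeholder identifiers
registration = Registration(unit, "cust-a", date(2023, 5, 1), "Example Retail UK", "UK")
claims = [
    ClaimRecord(unit, "cust-a", date(2023, 9, 1), "approved"),
    ClaimRecord(unit, "cust-z", date(2024, 1, 15), "flagged"),
]
print(len(history_for(claims, sgtin=unit)))          # claims against this unit
print(len(history_for(claims, customer_id="cust-a")))  # claims by this customer
```

Keying history on both the unit and the customer is what lets a single query answer "has this serial been claimed?" and "how often does this person claim?".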

Building Fraud Detection Into the Warranty Flow

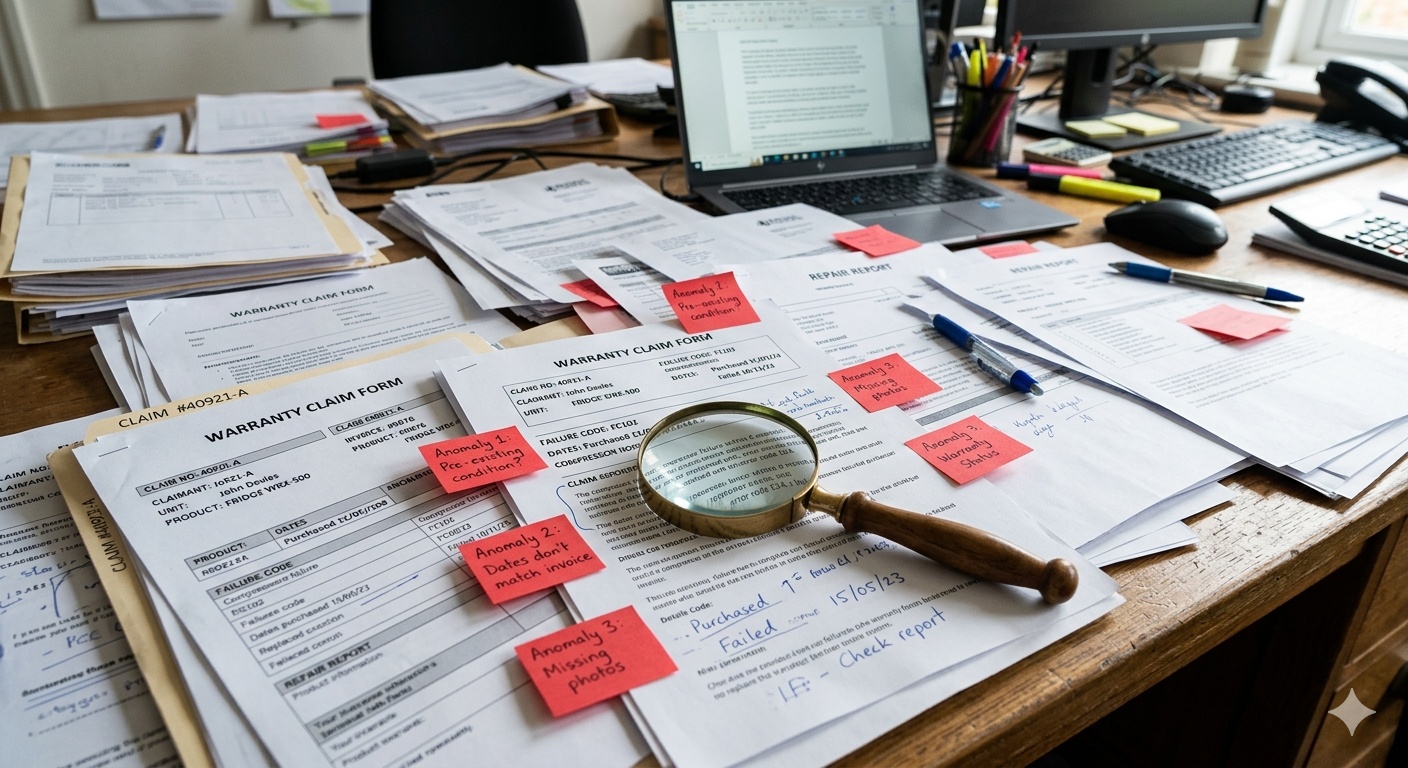

The most important design principle for effective warranty fraud detection is architectural: fraud detection must be a layer in the claims pipeline itself, not a separate reporting tool that warranty managers audit periodically. By the time an after-the-fact audit surfaces a fraudulent pattern, the claims have been paid and the money is gone. Detection needs to run at the moment of submission, automatically, on every claim.

In practice, this means every claim submission triggers a set of automated checks that run inline (serial validation, customer pattern analysis, geographic anomaly detection, and timing analysis) before the claim reaches a human. The routing logic then determines what happens next. Claims that pass all checks without anomaly signals move to auto-approval, completing the process without requiring manual attention and improving resolution speed for legitimate claimants. Claims that trigger one or more anomaly signals are routed to a manual review queue with the specific flags annotated: the reviewer sees exactly what triggered the hold and can make an informed judgment rather than starting from scratch. This integration into the pipeline is what creates the operational leverage of AI-powered detection: the volume of clean claims is handled automatically, and human review capacity is concentrated on the cases most likely to warrant denial. For how to streamline end-to-end claims processing, see warranty claim automation and connected product security.
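A pipeline layer of this kind can be sketched as a set of named check functions that each return either nothing or a human-readable reason, so that the review queue receives annotated flags rather than a bare "held" status. The check functions, claim fields, and thresholds below are hypothetical stand-ins for real detection logic.

```python
from typing import Callable, Dict, List, Optional

Claim = Dict[str, object]
# A check returns a reason string when it finds an anomaly, or None when clean.
Check = Callable[[Claim], Optional[str]]


def run_checks(claim: Claim, checks: Dict[str, Check]) -> List[str]:
    """Run every check at submission time and collect reviewer-facing annotations."""
    annotations = []
    for name, check in checks.items():
        reason = check(claim)
        if reason is not None:
            annotations.append(f"{name}: {reason}")
    return annotations


def frequency_check(claim: Claim) -> Optional[str]:
    count = claim["claims_last_90_days"]
    return f"{count} claims in 90 days" if count >= 4 else None  # assumed threshold


def geography_check(claim: Claim) -> Optional[str]:
    if claim["claim_country"] not in claim["distribution_countries"]:
        return f"claim from {claim['claim_country']} outside distribution footprint"
    return None


CHECKS = {"customer_frequency": frequency_check, "geography": geography_check}

claim = {
    "claims_last_90_days": 5,
    "claim_country": "PL",
    "distribution_countries": {"DE", "FR"},
}
for line in run_checks(claim, CHECKS):
    print(line)
```

Returning reasons instead of booleans keeps the reviewer from starting from scratch: the annotation says which signal fired and why.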

The Review Queue, Not the Reject Pile

A common mistake is treating fraud detection as binary: approve or deny. That approach creates two problems. Legitimate claims get denied because they triggered a false positive. And genuinely fraudulent claims get approved if they don't trigger a flag. Neither outcome is acceptable.

The better model is a three-lane pipeline. Auto-approve covers claims with no anomaly signals. Manual review covers claims with one or more anomaly signals that warrant human judgment. Auto-deny is reserved only for claims that fail hard validation — serial numbers that do not exist in your system, serials that have already been successfully claimed, or serials that match known counterfeits. The fraud detection layer is not a decision engine. It is a prioritization tool that puts human attention where it is most likely to be needed.
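The three-lane rule is small enough to state directly in code. In this sketch the `Lane` enum and `route` function are illustrative names; the only logic is the ordering described above, where hard validation failures are the sole path to auto-deny and any anomaly signal sends the claim to a person.

```python
from enum import Enum
from typing import Sequence


class Lane(Enum):
    AUTO_APPROVE = "auto_approve"
    MANUAL_REVIEW = "manual_review"
    AUTO_DENY = "auto_deny"


def route(hard_failures: Sequence[str], anomaly_flags: Sequence[str]) -> Lane:
    """Pick a lane: hard failures deny, anomalies go to review, clean claims approve."""
    if hard_failures:
        return Lane.AUTO_DENY
    if anomaly_flags:
        return Lane.MANUAL_REVIEW
    return Lane.AUTO_APPROVE


print(route([], []))                            # Lane.AUTO_APPROVE
print(route([], ["expiry_window_cluster"]))     # Lane.MANUAL_REVIEW
print(route(["serial_not_found"], ["geo"]))     # Lane.AUTO_DENY
```

Note that anomaly flags never reach `AUTO_DENY` on their own, however many fire; that is what keeps the detection layer a prioritization tool rather than a decision engine.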

Continuous Improvement Through Outcome Feedback

The model improves when reviewers close the loop. When a flagged claim is reviewed and confirmed as legitimate, that outcome feeds back into the training data — the model learns that this particular pattern combination does not reliably predict fraud in your specific customer population. When a flagged claim is confirmed as fraudulent, the signal is reinforced. Over time, the false positive rate drops and the detection rate improves. But only if the review queue is connected to model feedback, not just a dead-end decision log.
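A full feedback loop would feed reviewer decisions back into model training, which is beyond a short example. The sketch below shows only the bookkeeping that makes such a loop possible: tallying, per flag type, how often a reviewed claim was confirmed as fraud. The function name and the review tuple layout are assumptions.

```python
from collections import defaultdict


def flag_precision(reviews):
    """Share of reviewed claims confirmed as fraud, per flag type.

    reviews: iterable of (flags, confirmed_fraud) pairs, where flags is the
    list of signals that sent the claim to review and confirmed_fraud is the
    reviewer's final decision.
    """
    totals = defaultdict(int)
    confirmed = defaultdict(int)
    for flags, is_fraud in reviews:
        for flag in set(flags):  # count each flag once per claim
            totals[flag] += 1
            if is_fraud:
                confirmed[flag] += 1
    return {flag: confirmed[flag] / totals[flag] for flag in totals}


reviews = [
    (["geography"], True),
    (["geography", "expiry_window"], False),
    (["expiry_window"], False),
]
print(flag_precision(reviews))
```

A flag whose precision stays near zero for your customer population is a candidate for down-weighting; one that stays high is a signal being reinforced.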

This feedback loop is also what separates a useful fraud detection system from a liability. A system that flags and denies claims without human review — and without a mechanism to surface and correct false positives — will generate customer complaints, regulatory exposure, and trust damage that outweighs the fraud savings. The AI is the detection layer. The human is the judgment layer. Both are necessary.

What Good Looks Like

A mature AI-powered warranty fraud detection system, running against a well-structured claims pipeline with serialized product identity and linked customer registration data, produces a clear set of measurable outcomes within 12–18 months of deployment. Fraudulent and abusive claim rates, which typically start at 3–10% of total warranty spend, should decline toward 1–3% as the model calibrates against your specific customer population and claim history. Auto-approval rates for legitimate claims should reach north of 85%, because clean claims move through the pipeline faster than manual review ever allowed. Manual review queues become smaller in volume but higher in signal quality: fewer total claims require human attention, but the proportion of reviewed claims resulting in denials increases as the model learns to surface only genuine anomalies. The false positive rate — legitimate claims flagged incorrectly — should decrease over time as reviewer decisions feed back into model training. Crucially, the customer experience for legitimate claimants improves rather than degrades. Auto-approval means faster resolution: a clean claim that took two to three business days for manual serial validation now completes in minutes. The fraud prevention infrastructure and the claimant experience improvement are not in tension. A well-built system delivers both.
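The outcomes described here can be tracked from the pipeline's own decision log. The sketch below computes three of them from a list of (lane, final decision) pairs; the lane and decision labels are hypothetical, and "false positive rate" is defined here as the share of manually reviewed claims that were ultimately approved, which is one reasonable reading rather than a standard definition.

```python
def pipeline_metrics(outcomes):
    """Summarize routing outcomes.

    outcomes: list of (lane, final_decision) pairs, with lane in
    {"auto_approve", "manual_review", "auto_deny"} and final_decision in
    {"approved", "denied"}.
    """
    total = len(outcomes)
    reviewed = [decision for lane, decision in outcomes if lane == "manual_review"]
    auto_approved = sum(1 for lane, _ in outcomes if lane == "auto_approve")
    denied_in_review = sum(1 for decision in reviewed if decision == "denied")
    return {
        "auto_approval_rate": auto_approved / total if total else 0.0,
        "review_denial_rate": denied_in_review / len(reviewed) if reviewed else 0.0,
        "false_positive_rate": (
            (len(reviewed) - denied_in_review) / len(reviewed) if reviewed else 0.0
        ),
    }


# Illustrative log: 17 clean claims, 3 reviewed (2 denied, 1 approved).
log = [("auto_approve", "approved")] * 17
log += [("manual_review", "denied")] * 2 + [("manual_review", "approved")]
for name, value in pipeline_metrics(log).items():
    print(f"{name}: {value:.0%}")
```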

Fraud Detection Is a Platform Feature, Not an Add-On

Effective warranty fraud detection cannot be bolted on after a claims platform is already running. By the time an after-the-fact audit identifies a fraudulent pattern, the claims have been paid, the money is gone, and the data trail is cold. Detection must be structural: built into the claims pipeline at inception, powered by the same serialized product identity and customer registration data that a well-run warranty program is already capturing as standard practice. Treating fraud detection as a separate specialist tool that warranty teams access periodically is how organizations reach 15–25% fraud catch rates and accept the remainder as uncontrollable cost. Treating it as a pipeline layer (automated, real-time, running on every submission) is how organizations reach 60–75% catch rates without adding headcount. The data foundation required for AI fraud detection is not an additional investment if the warranty program is already operating on serialized identity and linked customer registration. It is the same data, applied through an additional analytical layer. For manufacturers running warranty programs above $1 million annually, the ROI case for AI-powered detection is straightforward: recovering fraud equal to 3 to 5% of spend on a $5 million program returns $150,000 to $250,000 annually. The fraud your current system cannot see is not undetectable. It is simply not being looked for in the right way.
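For readers who want to run the ROI arithmetic on their own numbers, recovered spend can be approximated as program spend times the share lost to fraud times the added catch rate. The sketch below is a back-of-envelope calculation only; the function name is invented, and the example inputs are illustrative values chosen from within the ranges quoted in this article.

```python
def recovered_spend(program_spend, fraud_share, catch_rate_gain):
    """Annual spend recovered: program spend x share lost to fraud x added catch rate."""
    return program_spend * fraud_share * catch_rate_gain


# Illustrative inputs for a $5 million program (assumed, not measured).
for fraud_share, gain in [(0.06, 0.50), (0.10, 0.50)]:
    amount = recovered_spend(5_000_000, fraud_share, gain)
    print(f"fraud share {fraud_share:.0%}, catch-rate gain {gain:.0%}: ${amount:,.0f}")
```

The result is sensitive to both inputs, so it is worth running with your own measured fraud share rather than an industry range.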

Further reading: Why Warranty Registration Still Matters — Warranty Analytics: What Your Data Should Tell You — Connected Product Analytics — Connected Product Security

UK Consumer Rights Note

UK consumers have statutory rights under the Consumer Rights Act 2015 that exist independently of any manufacturer warranty and cannot be reduced or replaced by warranty program terms. The Act provides three layers of protection: a 30-day right to reject faulty goods and receive a full refund, a 6-month repair or replacement period during which the burden falls on the retailer to prove the fault was not present at the time of purchase, and a long-stop claim period of up to 6 years from purchase for faults that emerge over time. Manufacturer warranties are supplementary coverage that may extend or add to statutory rights, but they are legally distinct from them. A manufacturer warranty program that denies a claim does not extinguish a consumer's statutory right to seek remedy from the retailer under the Act. For manufacturers operating warranty programs in the UK market, this distinction is operationally relevant: fraud detection systems must be designed to avoid denying claims that may carry independent statutory entitlement, and review processes should account for the possibility that a denied warranty claim may still be pursued through statutory channels. For authoritative guidance on UK consumer rights, see Citizens Advice and GOV.UK Consumer Rights Act.

FAQ: AI-Powered Warranty Fraud Detection

What is considered warranty fraud, and how much of it is actually intentional?

Warranty fraud ranges from intentional organized crime to opportunistic abuse. Most of it falls into the latter category: a customer whose product failed after 13 months claiming it's still under the 12-month warranty, a secondhand buyer submitting a claim without having registered as the owner, or a gray market unit purchased outside your authorized distribution network being claimed as if it were a legitimate domestic sale. Industry research consistently shows that fraudulent and abusive claims cost manufacturers 3–10% of their warranty program budgets. For a manufacturer with $10 million in annual warranty costs, that's $300,000 to $1 million per year. Most of these fraudsters are not criminals — they're customers testing what your system will catch. If your detection is weak, they will continue testing.

Why can't traditional rule-based fraud detection catch gray market activity?

Rule-based systems can confirm that a serial number is valid and that it hasn't been claimed before. They cannot determine whether that serial was ever sold into your intended distribution channel. A gray market unit may have a completely legitimate serial number from an authentic production run, but it was manufactured for the EU market and imported into North America outside your authorized channels. Traditional detection sees a valid serial in your database and approves the claim. AI-powered detection compares the unit's claimed purchase channel and origin against your known distribution footprint, flagging geographic mismatches (e.g., products distributed only in Western Europe being claimed from Eastern Europe) that suggest gray market activity.

How should AI-flagged claims be handled to avoid denying legitimate warranty claims?

The best approach is a three-lane pipeline rather than binary approve/deny logic. Lane 1 (auto-approve) covers claims with no anomaly signals, moving them through the system quickly. Lane 2 (manual review) covers claims with one or more anomaly signals that warrant human judgment — the AI flags these for expert review, but does not automatically deny them. Lane 3 (auto-deny) is reserved only for claims that fail hard validation: serials that don't exist in your system, serials already successfully claimed, or serials matching known counterfeits. Crucially, the manual review queue must be connected to outcome feedback so the model learns from human decisions and improves over time. This prevents the system from generating customer complaints and regulatory exposure from false positives.